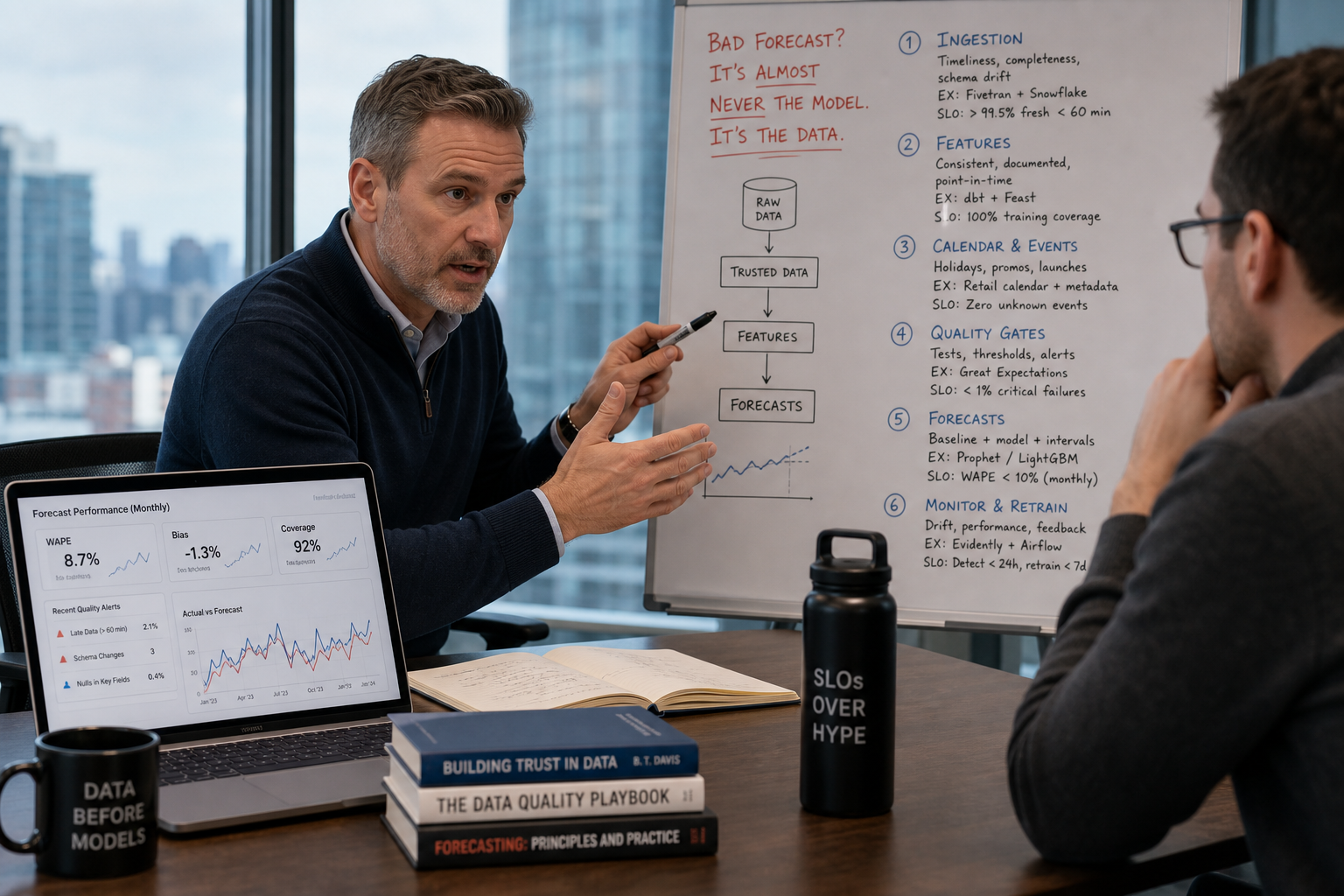

Bad forecasts are a data problem, not a modeling problem

If your forecasts explode in production, don’t retrain the model — fix the pipeline. Forecasting systems fail when ingestion drops, features shift, or backfills are missing. I’ll give a 6-step, production-first recipe with vendor picks (Databricks, Snowflake, MLflow, Prophet/LightGBM) and operational thresholds you can implement this quarter.

1) Start with data contracts and ingestion SLOs

Put a contract between producers (PLC, ERP, IoT gateway) and consumers (feature pipelines). Contracts should be machine-checkable: required fields, allowed null rates, type and cardinality. Enforce with CI and runtime checks using Great Expectations or dbt tests pushed into the pipeline.

- Minimum SLOs to set today: 99.9% ingestion uptime for streaming sources; schema-drift alert within 15 minutes; missing field rate <0.5% per hour for core telemetry.

- Tool picks: Kafka/Kinesis for streaming ingestion, Snowflake for durable raw storage, Great Expectations for checks, Databricks for downstream consumption.

- Outcome to expect: stop 60–90% of “mystery NAs” reaching features by catching schema churn at ingestion.

Why it matters: a single upstream schema change is responsible for the majority of silent forecast failures. A contract reduces mean time to detect from days to minutes.

2) Monitor data quality and expectedness, not just pipeline health

Pipeline success ≠ data correctness. Add expectedness tests that assert distributional bounds and cardinality. Use monitoring tools to track drift and alert when feature distributions move beyond thresholds.

- Tools: Great Expectations for expectations, Arize or Seldon for model input-drift monitoring, Databricks + Delta Lake for metric generation.

- Operational metric: maintain a rolling 7-day KS test p-value threshold per feature; alert on p < 0.01 or user-defined business thresholds.

- Example rule: if median sensor value shifts >20% from baseline for 30 consecutive minutes, create a high-priority incident with automated tag and rollback plan.

Numeric payoff: catch input drift before the model degrades. In our equipment-failure project, early drift detection removed false positives that previously consumed 30% of maintenance team time.

3) Build backfills and immutable lineage — assume you will need to re-run everything

Backfills are the operational soul of forecasting. Anything you can’t re-run quickly becomes tech debt that breaks forecasts.

- Requirements: scriptable backfills that can rehydrate features from raw Snowflake tables into the feature store; backfill window target: full 6–12 months in under 4 hours for mid-size deployments.

- Tools: Databricks for distributed compute backfills; Delta Lake for time travel; Feast or Tecton for feature versioning and serving.

- Lineage: track raw→feature→training snapshot with MLflow and Databricks Unity Catalog or Snowflake object tagging.

If you can't re-create exact training data, you can't diagnose why a production forecast changed. Make backfills part of your CI — run a 30-day backfill test on each release.

4) Engineer robust features and durable feature stores

Model fragility often starts with brittle features: lookups that go stale, joins that explode under cardinality change, time-leakage from misaligned timestamps.

- Rules: prefer time-aware aggregations computed in Databricks with event-time windows; persist features in Snowflake (or a feature store) with stored timestamps and TTLs.

- Feature hygiene: impute with business-sensible defaults (not zeros), include surrogates for missingness, and add upstream metadata like producer_partition and schema_version.

- Tools: Databricks + Delta Lake for feature pipelines, Feast or Tecton for serving reads, Snowflake as long-term feature archive.

Concrete metric: store one immutable feature snapshot per model version; that audit trail should let you reconstruct training data for any model within 24 hours.

5) Train ensembles, track everything with MLflow, and pick failover models 😎

Models should be treated like services. Use an ensemble that combines a simple, explainable baseline (Prophet for seasonality/intervention patterns) with a stronger learner (LightGBM) for covariate interactions.

- Why ensemble: Prophet handles calendar effects and sudden maintenance interventions; LightGBM captures nonlinear sensor interactions. Ensemble usually stabilizes errors across horizons.

- Tracking: log datasets, parameters, metrics and artifacts in MLflow. Record feature snapshots and the exact backfill used for training.

- Serving pattern: shadow-serve the ensemble; choose the baseline for failover when input quality degrades.

Prophet vs LightGBM — quick decision table:

| Use case | Prophet | LightGBM |

|---|---|---|

| Strong calendar/holiday effects | Yes | No |

| Fast retrain on small data | Yes | Moderate |

| Nonlinear interactions, many sensors | No | Yes |

| Explainability for ops | Good (trend+season) | Moderate |

Operational rule: if input-drift score crosses the predefined SLO, switch serving to Prophet-only mode and open a data incident. That keeps forecasts conservative and interpretable while ops investigate.

6) Define SLOs, runbooks, and automated failovers

Forecasts must have business SLOs tied to cost. Define SLOs for accuracy (e.g., MAPE threshold), freshness (max lag), and data quality (allowed nulls). Tie automated actions to breaches.

- Typical SLOs to adopt: forecast freshness < 5 minutes for real-time; MAPE alert when exceeding historical 99th percentile for the model; data-quality alert when required fields fail more than 0.5% in 1 hour.

- Runbook actions: auto-fail to baseline model, create paging incident to on-call data engineer, mark downstream decisions as “stale” in the UI.

- Failover: keep a simple deterministic rule-based fallback (e.g., last-observed rate with linear decay) when neither Prophet nor LightGBM can be trusted.

Business tie: on an equipment-failure engagement we shipped this exact stack and SLO strategy — it prevented $2.4M in downtime and reduced incidents by 85% by avoiding noisy forecasts and automating safe fallbacks.

Architecture (production sketch)

[IoT / ERP] --> (Ingestion: Kafka / Kinesis) --> Raw Landing (Snowflake Delta) --> Data Quality (Great Expectations)

|

v

Feature Pipelines (Databricks / Delta)

|

Feature Store (Feast/Tecton) + Feature Archive (Snowflake)

|

Training (LightGBM / Prophet) tracked in MLflow

|

Serving Layer: Seldon/Vertex/SageMaker -> Ensemble / Failover rules

|

Monitoring: Arize (drift) + Prometheus/Grafana (SLOs)

Operational checklist (quick)

- Ship a machine-readable data contract for each source this week.

- Add Great Expectations checks to all landing tables and alert on schema drift within 15 minutes.

- Implement one full backfill path: raw → features → training in under 4 hours.

- Register features and snapshots in Feast/Tecton; persist in Snowflake for audits.

- Train an ensemble (Prophet + LightGBM) and log everything to MLflow; enable Prophet-only failover.

- Define SLOs for data quality, freshness, and forecast accuracy and automate failover runbooks.

Conclusion & CTA

Need help with production forecasting? Book a free strategy call with Niche.dev.

Suggested Internal Links

- synthetic://cmouha5dg0000mh0fg9jxfbt2/indexed-content/niche-dev/data-audit-ai.md

- synthetic://cmouha5dg0000mh0fg9jxfbt2/indexed-content/niche-dev/mlops-enterprise.md

- synthetic://cmouha5dg0000mh0fg9jxfbt2/indexed-content/niche-dev/enterprise-ai-strategy.md